If Amazon Redshift determines that a new distribution style or key will improve the performance of queries, then Amazon Redshift might change the distribution style or key of your table in the future.

In this case, Amazon Redshift makes no changes to the table.

A small table with DISTSTYLE ALL is converted to AUTO(ALL).
A small table with DISTSTYLE EVEN is converted to AUTO(ALL).
A small table with DISTSTYLE KEY is converted to AUTO(ALL).
A large table with DISTSTYLE ALL is converted to AUTO(EVEN).
A large table with DISTSTYLE EVEN is converted to AUTO(EVEN).
A large table with DISTSTYLE KEY is converted to AUTO(KEY) and the DISTKEY is preserved.

ALTER COLUMN column_name TYPE
A clause that changes the size of a column defined as a VARCHAR data type. This clause only supports altering the size of a VARCHAR data type.

RENAME TO new_name
A clause that renames a table (or view) to the value specified in new_name. A table name beginning with '#' indicates a temporary table. You can't rename a permanent table to a name that begins with '#'. The maximum table name length is 127 bytes.

OWNER TO new_owner
A clause that changes the owner of the table (or view) to the new_owner value.

To reduce the time to run the ALTER TABLE command, you can combine some clauses of the ALTER TABLE command. Amazon Redshift supports the following combinations of the ALTER TABLE clauses:

from information_schema.table_constraints

RESTRICT
A clause that removes only the specified constraint. RESTRICT is an option for DROP CONSTRAINT.

CASCADE
A clause that removes the specified constraint and anything dependent on that constraint. CASCADE is an option for DROP CONSTRAINT.

| DROP PARTITION ( partition_column=partition_value )
| ALTER DISTSTYLE KEY DISTKEY column_name

| ALTER COLUMN column_name ENCODE encode_type.
| ALTER COLUMN column_name ENCODE encode_type,
| ALTER COLUMN column_name ENCODE new_encode_type

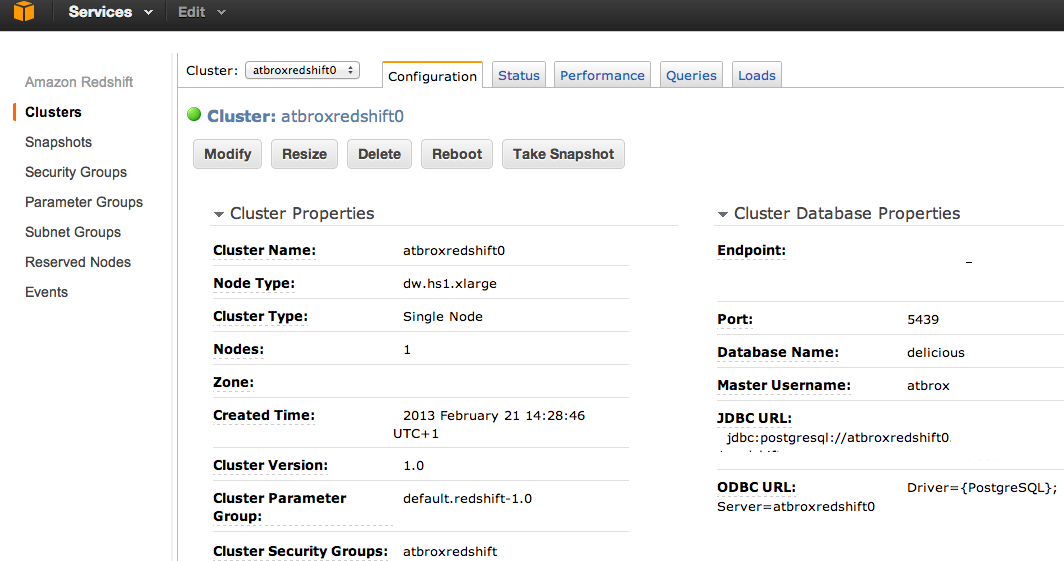

| ALTER COLUMN column_name TYPE updated_varchar_data_type_size

Table owner with the USAGE privilege on the schema

Redshift is a knowledge-intensive database, which can handle Big Data in a timely manner when and only when it is operated correctly, and although I may be wrong, I think the people having you perform this work do not understand what they are getting into.

The choice of distribution key and sort key is driven by the queries you intend to issue on the table, for the choice made defines which queries can be issued in a timely manner on the table, and those need to be the business queries you need to run. You cannot choose either of these keys simply by taking a table on its own, without any consideration for how the table will be queried.

As an aside, I would strongly advise you, if your example value column here is representative, to convert your value column to an integer, by multiplying its values by 1000, and converting it back to a float when you need to use it. This is because the sorting value for floats is the integer part of the number, and so all those example numbers have a sorting value of 1, which is to say, if most of your values are like that, min-max culling (the zone map) will have basically no effect, and that will make your queries behave much as they did on MS SQL - the entire column will be scanned - and only the expensive brute power of the hardware in the cluster will provide an improvement in performance.

Ah, and, finally, if you insert your data out of order, and you have six billion rows, you're going to have a multi-day VACUUM after you've finished inserting your data.
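To make the zone-map point concrete, here is a toy Python model of min-max culling. It is only a sketch of the claim made above, not Redshift's actual implementation: the block size of four rows, the sample values, and the helper names `zone_map` and `blocks_to_scan` are all assumptions made up for illustration, and the rule "a float's sorting value is its integer part" is taken from the text rather than verified here.

```python
import math

BLOCK_ROWS = 4  # hypothetical toy block size; real blocks are sized in bytes, not rows


def zone_map(values, sort_value):
    """Return the (min, max) sorting value for each block of the column."""
    blocks = [values[i:i + BLOCK_ROWS] for i in range(0, len(values), BLOCK_ROWS)]
    return [(min(map(sort_value, b)), max(map(sort_value, b))) for b in blocks]


def blocks_to_scan(zones, lo, hi):
    """Count blocks whose (min, max) range overlaps the predicate range [lo, hi]."""
    return sum(1 for zmin, zmax in zones if zmax >= lo and zmin <= hi)


# A sorted float column where every value has integer part 1 (made-up sample data).
floats = [1.001, 1.105, 1.210, 1.315, 1.420, 1.525, 1.630, 1.735]

# Assumption from the text: the sorting value of a float is its integer part.
float_zones = zone_map(floats, math.floor)
# Predicate "value BETWEEN 1.6 AND 1.7" becomes 1..1 in sorting-value terms.
float_scanned = blocks_to_scan(float_zones, math.floor(1.6), math.floor(1.7))

# The same data stored as integers, scaled by 1000 as suggested above.
scaled = [round(v * 1000) for v in floats]
int_zones = zone_map(scaled, lambda v: v)
int_scanned = blocks_to_scan(int_zones, 1600, 1700)

# Convert back to floats only when the values are actually needed.
restored = [v / 1000 for v in scaled]

print(float_scanned, int_scanned)
```

In this toy model every float block carries the same (1, 1) range, so the predicate cannot rule any block out, while the scaled integer column lets one of the two blocks be skipped. The numbers are only illustrative; how much culling you get on a real table depends on the data, the sort key, and the queries.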
